Public

Hackathon landscape analysis

Python pipeline that scraped, structured, clustered, and scored 4496 projects from the Gemini API Developer Competition on Devpost. Findings published as a LinkedIn post.

TL;DR

- Multi-stage pipeline: scrape → structure → cluster (TF-IDF + KMeans) → score → AI narrative (Claude).

- 4496 projects analyzed; 4292 scored (95.5% success rate).

- Key finding: Developer Tools and Health scored highest; Media/Creative most crowded and least differentiated.

- Findings published as a LinkedIn post; analysis also informed product positioning for a later project.

Reusable patterns

- Multi-stage pipeline with raw artifact preservation: each stage is resumable and independently debuggable.

- TF-IDF + KMeans as fast baseline for domain clustering on short text (project descriptions).

- Custom scoring rubric (innovation, impact, scalability) as a proxy for "interesting" before reading full docs.

- State tracking (meta.json) for resumable scraping: restart a long run without re-fetching already-scraped pages.

- Claude API for narrative deepening: LLM adds qualitative insight on top of quantitative cluster outputs.

Context

The Gemini API Developer Competition on Devpost received 4496 project submissions — too many to read manually.

Goal: understand the landscape systematically — dominant domains, under-served areas, technology signals, and award candidates.

Decisions

- Multi-stage pipeline (fetch → structure → analyze → deepen): each stage writes outputs to disk before the next one starts — failures are cheap and runs are resumable.

- Retry + jitter in the scraper (6 attempts, 0.7s ± 0.15s delays): steady throughput without triggering rate limits.

- TF-IDF + KMeans for unsupervised clustering: fast, interpretable, no labeled data needed.

- Custom scoring rubric: weighted composite of innovation, impact, and scalability signals extracted from project text.

- Claude API for Phase 2 deepening: the quantitative pipeline surfaces structure; Claude adds narrative and qualitative context.

Architecture

- fetch: scrapes gallery index pages + project detail pages with retry, jitter, and raw HTML preservation.

- structure: normalizes JSONL into master table, extracts tech tags, links (repo/demo/video), team members.

- analyze: TF-IDF + KMeans (18 clusters), domain rule classification, composite scoring, award candidate nomination.

- deepen (Phase 2): Claude API generates narrative summaries on top of quantitative clusters.

- All intermediate outputs written to disk (dump/, structured/, analysis/) — pipeline is fully resumable at any stage.

Outcome

- 4292 of 4496 projects scored (95.5% success rate).

- Key finding: Developer Tools (avg score 55.9) and Health & Wellness (55.6) were the highest-quality domains; Media/Creative Tools (48.5% of all submissions, avg 46.6) was the most crowded and least differentiated.

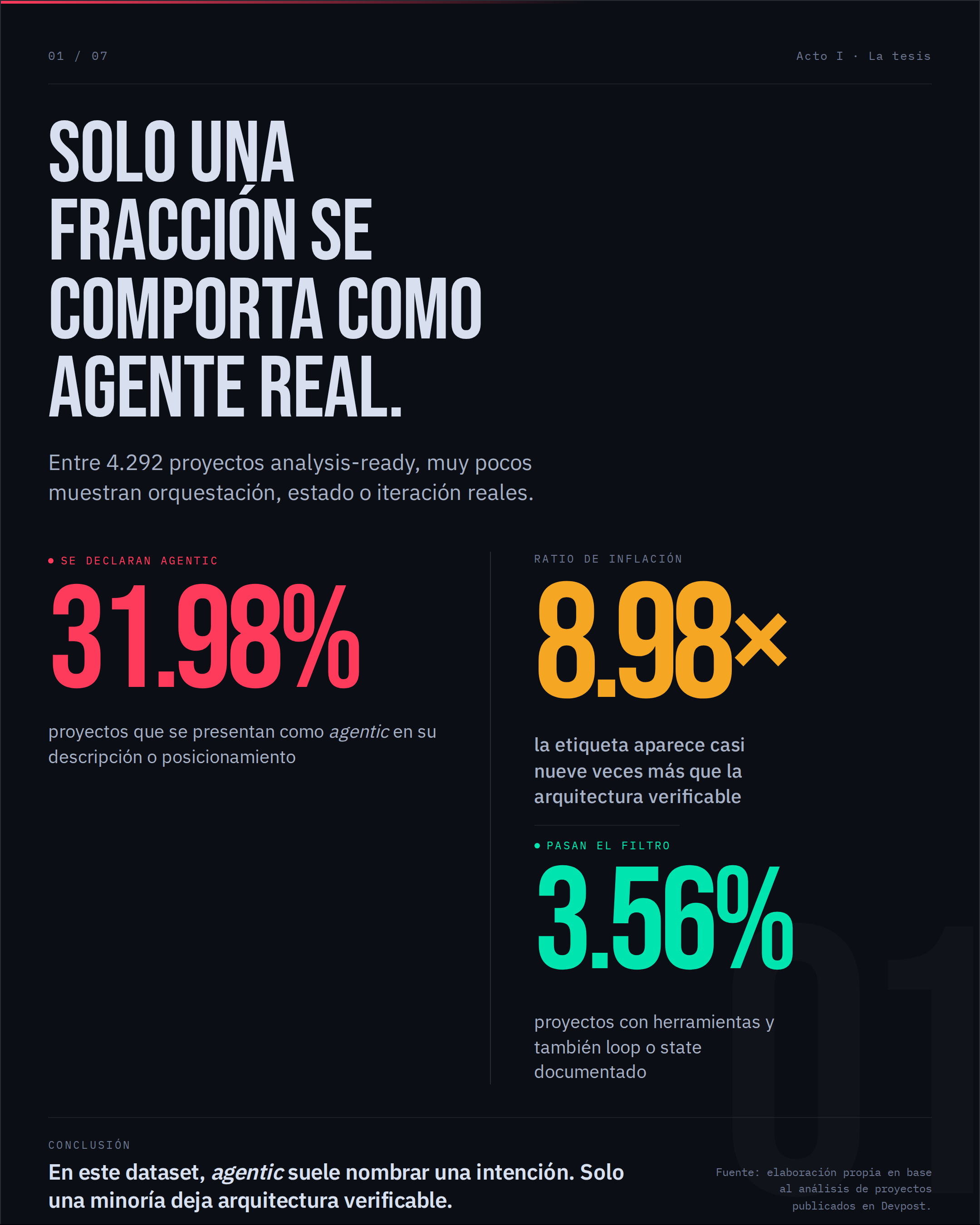

- 31.98% of projects agentic; 32.25% multimodal — identified as emerging capability clusters worth tracking.

- Findings published as a LinkedIn post; analysis also used for competitive positioning in a later product.